Lewis, Fallis, and Fitelson on accuracy and scientific progress

In a terrific recent paper in Ergo, ‘Accuracy-First Epistemology and Scientific Progress’, Peter Lewis, Don Fallis, and Branden Fitelson (henceforth, LFF) provide the latest version of an argument against accuracy-first epistemology that originates in Fallis and Lewis’ 2016 paper and received another recent update in their 2021 paper. According to this argument, various putative ways of measuring accuracy that are used in the accuracy-first programme are not good ways of measuring that quantity, because they have the consequence that, in many historical cases of scientific progress, the accuracy of the scientists’ credences drops, whereas we would clearly like to say that it rises.

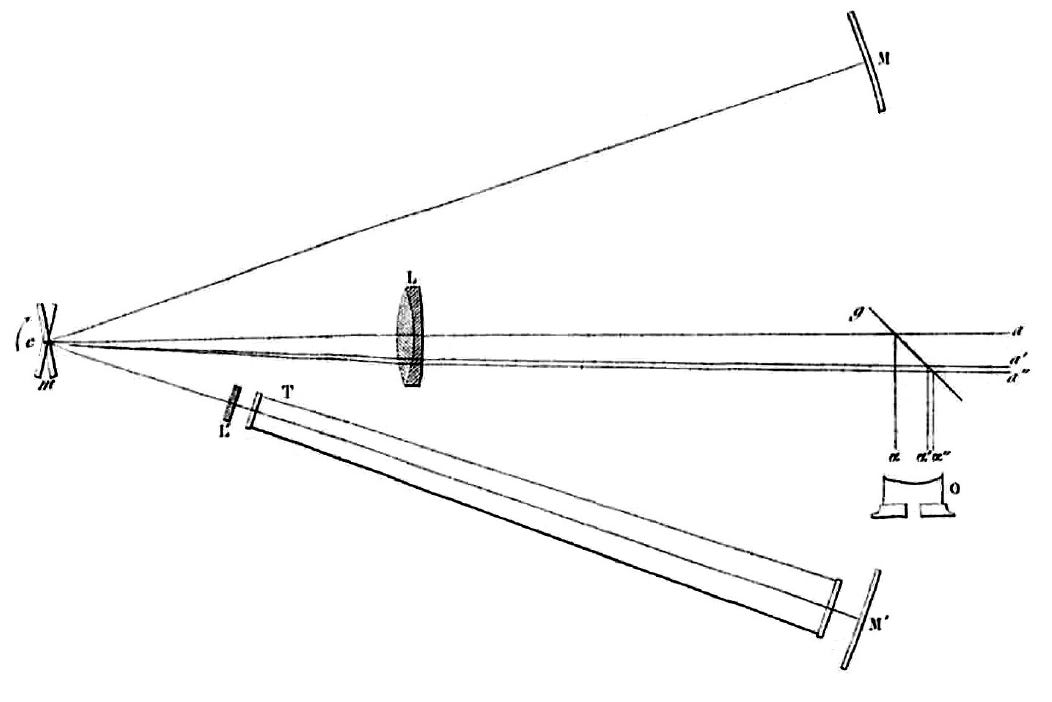

LFF describe the Michelson-Morley experiment concerning electromagnetic ether, Léon Foucault’s rotating mirror experiment concerning the speed of light in different media, Semmelweis’s hand-washing experiments concerning the cause of childbed fever, and the experiments that ruled out the MMR vaccination as the cause of autism. In each case, they say, the experiment ruled out a false hypothesis. But when the scientists reduce their credence in that hypothesis to zero and update their credences in the two remaining hypotheses by increasing them both by the same factor, as the Bayesian demands, they are left with credences that are less accurate than those they had before the update, according to certain measures of accuracy that accuracy-first epistemology tends to favour. Updating as the Bayesian says they should on the falsity of a false hypothesis makes them less accurate than they were. This, they suggest, is so contrary to our intuitive reaction to these cases that it rules out these measures as good explications of the concept of the accuracy of credences.

Let’s see one of these cases in action. Suppose you have three hypotheses:

Hypothesis 1: there are environmental causal factors for autism, but the MMR vaccination is not one of them.

Hypothesis 2: there are no environmental causal factors for autism.

Hypothesis 3: MMR vaccination is a causal factor for autism.

LFF say that Hypothesis 1 is the true hypothesis, and I’ll assume that here. Prior to the experiments that conclusively ruled out Hypothesis 3, a well-informed scientist would nonetheless have given very low credence to it, since they would have appreciated that the study that claimed to establish it was small and flawed in obvious ways; so it was a possibility for them, but they’d been given no reason to assign very high credence to it. So let’s suppose they assigned credences 0.45, 0.5, and 0.05, respectively, to Hypotheses 1, 2, and 3. And now suppose they learn of the experiment that conclusively rules out Hypothesis 3. So now they drop their credence in that to 0, and raise their credences in Hypotheses 1 and 2 by the same factor so that they sum to 1: i.e. their new credences are 0.473… (i.e. 0.45/(0.45+0.5)), 0.526… (i.e. 0.5/(0.45+0.5)), and 0. Then, according to the so-called Brier score, their credences beforehand have accuracy 0.815, while their credences afterwards have accuracy around 0.805.[1]
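The update step itself is easy to check by hand or in a few lines of code. The following is a minimal Python sketch of it; the function name `conditionalize` is just an illustrative choice, and the credences are the ones supposed above.

```python
def conditionalize(credences, ruled_out):
    """Set the credence in the ruled-out hypothesis to 0 and rescale the rest to sum to 1."""
    remaining = sum(c for i, c in enumerate(credences) if i != ruled_out)
    return [0.0 if i == ruled_out else c / remaining for i, c in enumerate(credences)]

# Credences in Hypotheses 1, 2, and 3 before the experiment (from the text).
prior = [0.45, 0.5, 0.05]

# Rule out Hypothesis 3 (index 2, since Python counts from 0).
posterior = conditionalize(prior, ruled_out=2)

print([round(c, 3) for c in posterior])  # [0.474, 0.526, 0.0]
```

Both surviving credences are multiplied by the same factor, 1/(0.45+0.5), so their ratio is unchanged by the update.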

How might the accuracy-firster respond to this? We might agree that LFF’s argument rules out the Brier score as a good measure of accuracy; or we might continue to think it’s a good measure of accuracy, but view LFF’s argument as showing that accuracy is not the only source of epistemic value, and so the Brier score is not a good measure of epistemic utility. In that case, we might seek out an alternative measure of accuracy, or epistemic utility more generally, that does everything the accuracy-firsters want it to do, such as underpin arguments for Probabilism and Conditionalization, but also ensures that, in the cases from the history of science that LFF present, the scientists count as learning.

LFF express pessimism about this strategy, but I think there are reasons for optimism, and that’s what I want to describe here. To see why I’m optimistic, it helps to think about an insight that Ben Levinstein provides in his wonderful paper, ‘A Pragmatist’s Guide to Epistemic Utility’, building on earlier work by Mark Schervish. I’ll sketch Levinstein’s idea, because it is worth appreciating in itself, and then I’ll show how we might use it to answer LFF’s worry.

We use our credences to represent the world; but we also use them to guide our actions. In accuracy-first epistemology, we have typically been concerned with the former role. However, Levinstein shows that, in a certain sense, the measures we use to evaluate credences for their success in representing the world are the same measures we use to evaluate them for their success in guiding action: in both cases, it’s the strictly proper scoring rules.

The idea, which is inspired by a suggestion of Mark Schervish, is very roughly this. Given a set of credences, a decision problem, and a way the world might be, we can say what the utility is, at that world, of having those credences when you face that decision problem. It is the utility, at that world, of whatever option those credences would lead you to pick from those available in that decision problem. So the utility of a set of credences is the utility of the option they guide you to choose.

Now suppose you’re uncertain what decisions you’ll face with your credences. So, you place a probability distribution over all the possible ones. Then we can, for a given way the world might be, say what the expected utility of those credences is: it is the expectation, relative to the probability function over all the possible decision problems, of the utility, at that world, of those credences when they face whatever decision problem they’ll face. Levinstein proposes that we evaluate the credences using this expected utility. And he shows that, if we do so, then the measure of their pragmatic, action-guiding value that it gives is a strictly proper scoring rule. What’s more, any strictly proper scoring rule can be recovered in this way. That is, for any such scoring rule, there’s always some probability distribution over the possible decision problems such that the expected utility of some credences relative to that distribution matches the score of those credences relative to the scoring rule.

Let’s see this in action. Suppose you know you’ll face a decision of the following form: there are two states of the world, and there are two options; the utility of the first option is 0 at the first state and t at the second, for some t between 0 and 1; the utility of the second option is 1-t at the first state and 0 at the second.

But suppose you’re otherwise completely uncertain about which decision you’ll face, and so you place a uniform distribution over the possible values of t, which ranges from 0 to 1. Then it turns out that the expected utility of assigning credence p to the first state of the world and 1-p to the second is essentially the Brier score of those credences.[2] That expected utility can be viewed as our best estimate of the utility of those credences from our position of ignorance about the decision problem we’ll face with them; or it can be viewed as a sort of average performance of those credences as guides to action.
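For readers who like to check such claims numerically, here is a small Python sketch. It averages, over a fine grid of values of t, the utility of the option that credence p would pick, and compares the result with half of one minus the squared distance from the truth, which is the sense of "essentially the Brier score" assumed here. The function names and the grid size are illustrative choices, not anything taken from Levinstein’s paper.

```python
def utility_of_credence(p, t, world):
    """Utility, at `world` (1 or 2), of the option that credence p in state 1 picks in problem t."""
    eu_option1 = (1 - p) * t      # option 1 pays 0 at state 1 and t at state 2
    eu_option2 = p * (1 - t)      # option 2 pays 1 - t at state 1 and 0 at state 2
    if eu_option1 > eu_option2:   # this holds exactly when t > p
        return 0.0 if world == 1 else t
    return (1 - t) if world == 1 else 0.0

def average_utility(p, world, steps=20_000):
    """Midpoint-rule average of the utility over t uniform on [0, 1]."""
    total = 0.0
    for k in range(steps):
        t = (k + 0.5) / steps
        total += utility_of_credence(p, t, world)
    return total / steps

def half_brier_accuracy(p, world):
    """Half of (1 - squared distance between the credence in state 1 and its truth value)."""
    truth = 1.0 if world == 1 else 0.0
    return 0.5 * (1 - (truth - p) ** 2)

for p in (0.2, 0.5, 0.9):
    for world in (1, 2):
        print(p, world, average_utility(p, world), half_brier_accuracy(p, world))
```

The two printed columns should agree up to the discretisation error of the grid, which shrinks as `steps` grows.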

Now, that’s quite a specific case: we have credences in just two possibilities; and we know exactly the form of the decision problem we’ll face, but nothing more. But Levinstein’s approach generalises. Suppose we have credences over three states of the world, as do the scientists in LFF’s examples. We know that we’ll face a choice between two options defined over those three states of the world, but we know nothing more than that. And so we place a uniform distribution over the possible decision problems; and, for each possible world, we take the expected utility, at that world, of the option those credences lead you to pick. Doing this, we again get a strictly proper scoring rule. Since it’s a strictly proper scoring rule, we can use it to give the accuracy-firster’s arguments for Probabilism and Conditionalization.[3] But, what’s more, it turns out that, for any assignment of credences to the three worlds, learning evidence that rules out one of the worlds and conditioning on it leads to credences that are strictly more accurate by the lights of this strictly proper scoring rule at any of the remaining worlds. And that’s exactly what LFF demand.
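Here is a rough Monte Carlo sketch of this kind of comparison in Python. It rests on an assumption that goes beyond what is said above: the "uniform distribution over the possible decision problems" is modelled by drawing each of the six utilities (two options at three worlds) independently and uniformly from [0, 1]. That may or may not be the parameterisation behind the plots, so the sketch is an illustration of the method rather than a reproduction of the result. The function names, the sample size, and the example credences are all hypothetical choices.

```python
import random

def pick_and_pay(credences, option_a, option_b, world):
    """Utility at `world` of whichever option maximizes expected utility by `credences`."""
    eu_a = sum(c * u for c, u in zip(credences, option_a))
    eu_b = sum(c * u for c, u in zip(credences, option_b))
    chosen = option_a if eu_a > eu_b else option_b
    return chosen[world]

def estimated_gain(prior, world=0, ruled_out=2, samples=50_000, seed=0):
    """Estimate (posterior score - prior score) at `world`, reusing the same sampled problems for both."""
    rng = random.Random(seed)
    rest = sum(c for i, c in enumerate(prior) if i != ruled_out)
    posterior = [0.0 if i == ruled_out else c / rest for i, c in enumerate(prior)]
    gain = 0.0
    for _ in range(samples):
        # ASSUMPTION: every utility is drawn independently and uniformly from [0, 1].
        option_a = [rng.random() for _ in range(len(prior))]
        option_b = [rng.random() for _ in range(len(prior))]
        gain += pick_and_pay(posterior, option_a, option_b, world)
        gain -= pick_and_pay(prior, option_a, option_b, world)
    return gain / samples

# Worlds are indexed from 0, so world=0 is the first possibility and ruled_out=2 the third.
print(estimated_gain([0.2, 0.3, 0.5], world=0))
print(estimated_gain([0.2, 0.3, 0.5], world=1))
```

A positive estimate means that, under the assumed distribution, conditioning on the evidence that rules out the third world improves the score at the world in question. When the prior credence in the eliminated world is small, the true gain is small as well, so more samples are needed before the sign of the estimate can be trusted.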

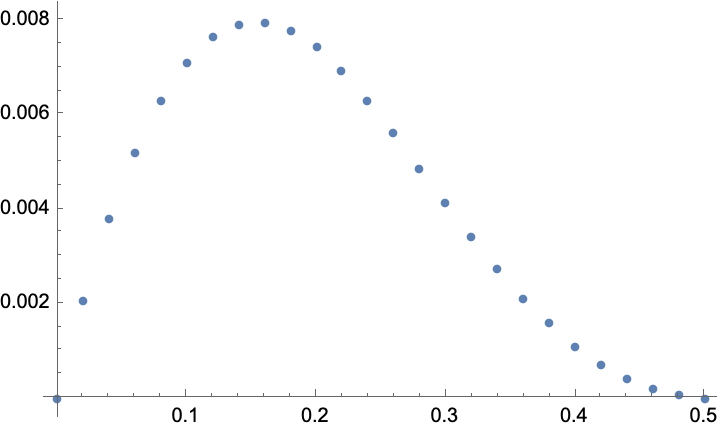

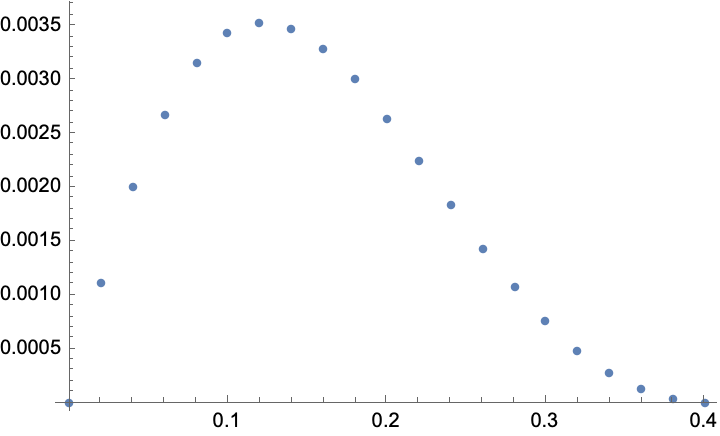

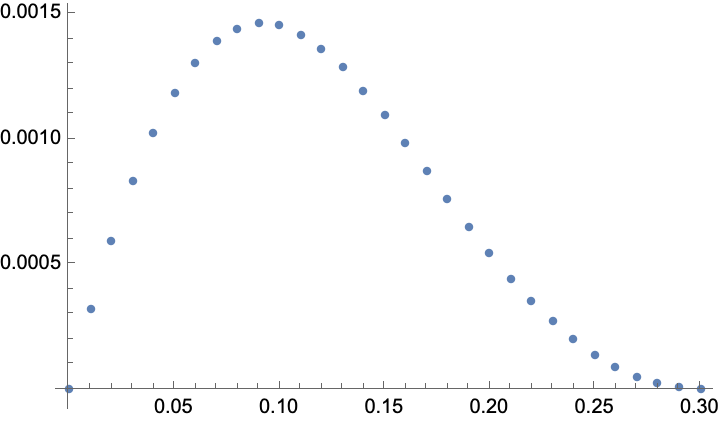

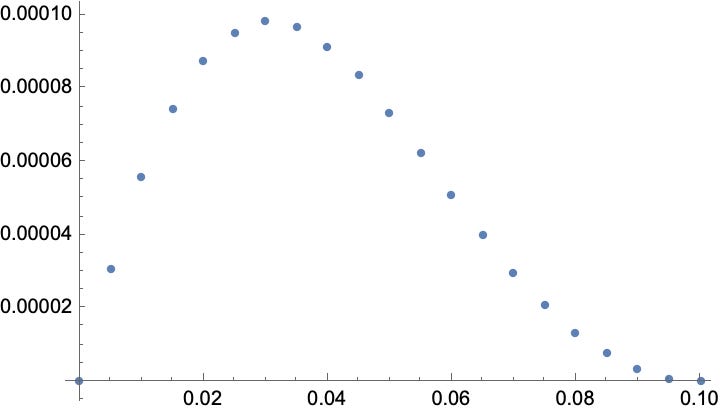

In the series of plots above, we see, for different credences (p1, p2, p3) in three possibilities, the difference between the accuracy, at the first possibility, of the posterior credences (p1/(p1+p2), p2/(p1+p2), 0) that are obtained by ruling out the third possibility, and the accuracy, again at the first possibility, of the prior credences. As we can see, the value is always positive, which means that the posteriors are always strictly better than the priors, as LFF require.

Appendix: derivation of Brier score

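The pragmatic utility, at World 1, of assigning credence p to World 1 and credence 1-p to World 2 when facing the decision problem with parameter t is 0 if p < t < 1, since Option 1 maximizes expected utility in that case, and it is 1-t if 0 < t < p, since Option 2 maximizes expected utility in that case. And so the expected utility, at World 1, relative to the uniform distribution over t, is this:

$$\int_0^p (1-t)\,dt + \int_p^1 0\,dt = p - \frac{p^2}{2} = \frac{1}{2}\left(1 - (1-p)^2\right)$$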

The pragmatic utility, at World 2, of assigning credence p to World 1 and credence 1-p to World 2 when facing the decision problem with parameter t is t if p < t < 1, since Option 1 maximizes expected utility in that case, and it is 0 if 0 < t < p, since Option 2 maximizes expected utility in that case. And so the expected utility, at World 2, relative to the uniform distribution over t, is this:

$$\int_0^p 0\,dt + \int_p^1 t\,dt = \frac{1}{2}\left(1 - p^2\right)$$

[1] The Brier score of credences p1, p2, p3 in three exclusive and exhaustive possibilities, where the ith possibility is true, is:

[2] I’ll pop the proof in a little appendix at the end, since it’s a nice illustration of what’s going on.

[3] There are some technicalities to deal with here. As it stands, this definition gives a strictly proper score over the probabilistic credences. To secure the arguments for Probabilism and Conditionalization, we need to extend it continuously to the non-probabilistic credences. But there are many ways to so extend it. The arguments for Probabilism that depend on strictly proper scoring rules begin with Savage and then Predd et al.; I generalized them to the sort of case we have here, and Michael Nielsen generalized them still further, giving the most advanced result in the area. One of the arguments for Conditionalization is given by Hilary Greaves & David Wallace. The other began with me and Ray Briggs, and then Michael Nielsen corrected our proof and extended our result, again giving the most advanced result in the area.

Is self-promotion allowed in the comments? :-) My collaborators and I have some results on elimination counterexamples to report which might be relevant.

What does the scoring rule look like that you are working with in the three world, uniform distribution over decisions case? It's been a while since I've read any of the Lewis and Fallis things, so I thought they had been using an argument that ruled out any scoring rule other than the logarithmic one, but I suspect that this isn't the logarithmic rule you are getting here.